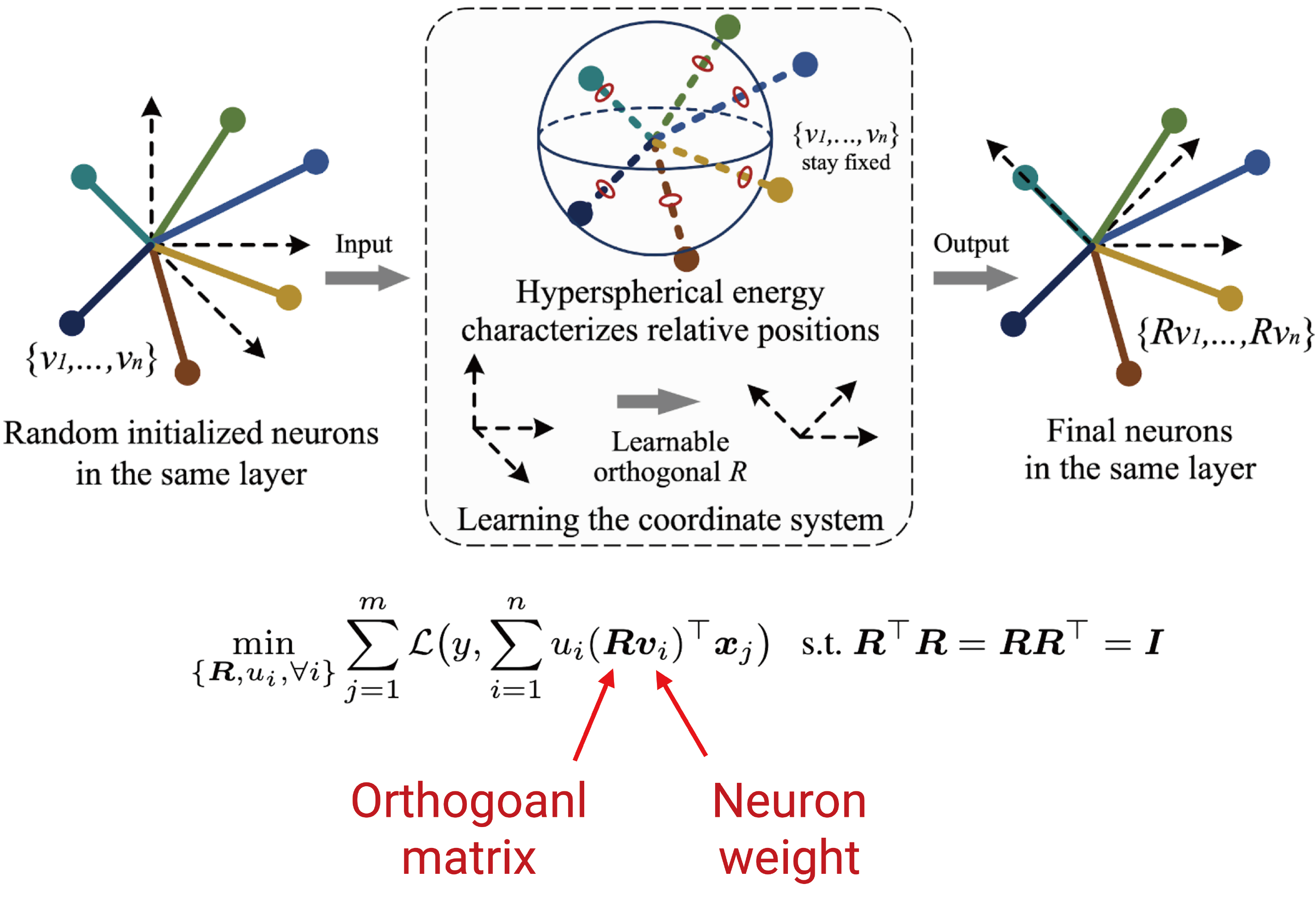

The inductive bias of a neural network is largely determined by its architecture and training algorithm, so how the network is trained is crucial for good generalization. We propose a novel orthogonal over-parameterized training (OPT) framework that can provably minimize the hyperspherical energy, which characterizes the diversity of neurons on a hypersphere. By maintaining minimum hyperspherical energy during training, OPT can greatly improve generalization. Specifically, OPT fixes the randomly initialized weights of the neurons and learns an orthogonal transformation that is applied to these neurons. Because an orthogonal transformation preserves all pairwise angles among the neurons, the hyperspherical energy stays unchanged throughout training. We propose multiple ways to learn such an orthogonal transformation, including unrolling orthogonalization algorithms, applying orthogonal parameterization, and designing orthogonality-preserving gradient descent. Interestingly, OPT reveals that learning a proper coordinate system for neurons is crucial to generalization and may be more important than learning specific relative positions among neurons. We provide insights into why OPT yields better generalization. Extensive experiments validate the superiority of OPT.
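The property that lets OPT keep the hyperspherical energy of its initialization can be checked numerically. Below is a minimal NumPy sketch, not the authors' implementation. It assumes that neurons are the columns of a weight matrix `W` and that hyperspherical energy is the Riesz s-energy (here s = 2) of the normalized neurons. It also uses the Cayley transform as one example of an orthogonal parameterization. The names `hyperspherical_energy` and `cayley` are hypothetical.

```python
import numpy as np


def hyperspherical_energy(W: np.ndarray, s: float = 2.0) -> float:
    """Riesz s-energy of the neurons (columns of W) projected onto the unit hypersphere.

    Assumed form: E = sum over i != j of ||w_i_hat - w_j_hat||^(-s), with s > 0.
    """
    U = W / np.linalg.norm(W, axis=0, keepdims=True)  # unit-norm neurons
    diff = U[:, :, None] - U[:, None, :]               # (d, n, n) pairwise differences
    dist = np.linalg.norm(diff, axis=0)                # (n, n) pairwise distances
    off_diag = ~np.eye(U.shape[1], dtype=bool)         # exclude the i == j terms
    return float(np.sum(dist[off_diag] ** (-s)))


def cayley(M: np.ndarray) -> np.ndarray:
    """Map an unconstrained square matrix M to an orthogonal matrix via the Cayley transform."""
    A = M - M.T                                        # skew-symmetric part: A^T = -A
    I = np.eye(A.shape[0])
    # I + A is always invertible for skew-symmetric A; this returns (I + A)^{-1} (I - A)
    return np.linalg.solve(I + A, I - A)


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    W = rng.standard_normal((8, 16))   # frozen random init: 16 neurons in R^8
    M = rng.standard_normal((8, 8))    # the only trainable parameter in this sketch
    R = cayley(M)
    print(np.allclose(R.T @ R, np.eye(8)))  # True: R is orthogonal
    print(np.isclose(hyperspherical_energy(W), hyperspherical_energy(R @ W)))  # True
```

In an OPT-style layer under these assumptions, `W` would stay frozen and only `M` would be updated by gradient descent. Because `cayley(M)` is orthogonal for every `M`, the transformed neurons `R @ W` keep exactly the pairwise distances, and hence the energy, of the initialization at every step.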

Overview of the Algorithm

Paper and Bibtex

[ArXiv] [Bibtex]

@InProceedings{Liu2021OPT,
  title={Orthogonal Over-Parameterized Training},
  author={Liu, Weiyang and Lin, Rongmei and Liu, Zhen and Rehg, James M. and Paull, Liam and Xiong, Li and Song, Le and Weller, Adrian},
  booktitle={CVPR},
  year={2021}
}
